With the advent of generative AI, AI training is emerging as a new form of platform work. Platforms such as ride-hailing service Grab or AI service Outlier act as intermediaries between workers (drivers, AI trainers) and buyers (users, enterprises). Algorithms govern their interactions, matching workers and buyers based on workers’ ratings, profiles, and other metrics. Tasks are also broken down into highly fragmented, atomised assignments that are easily traded on platforms.

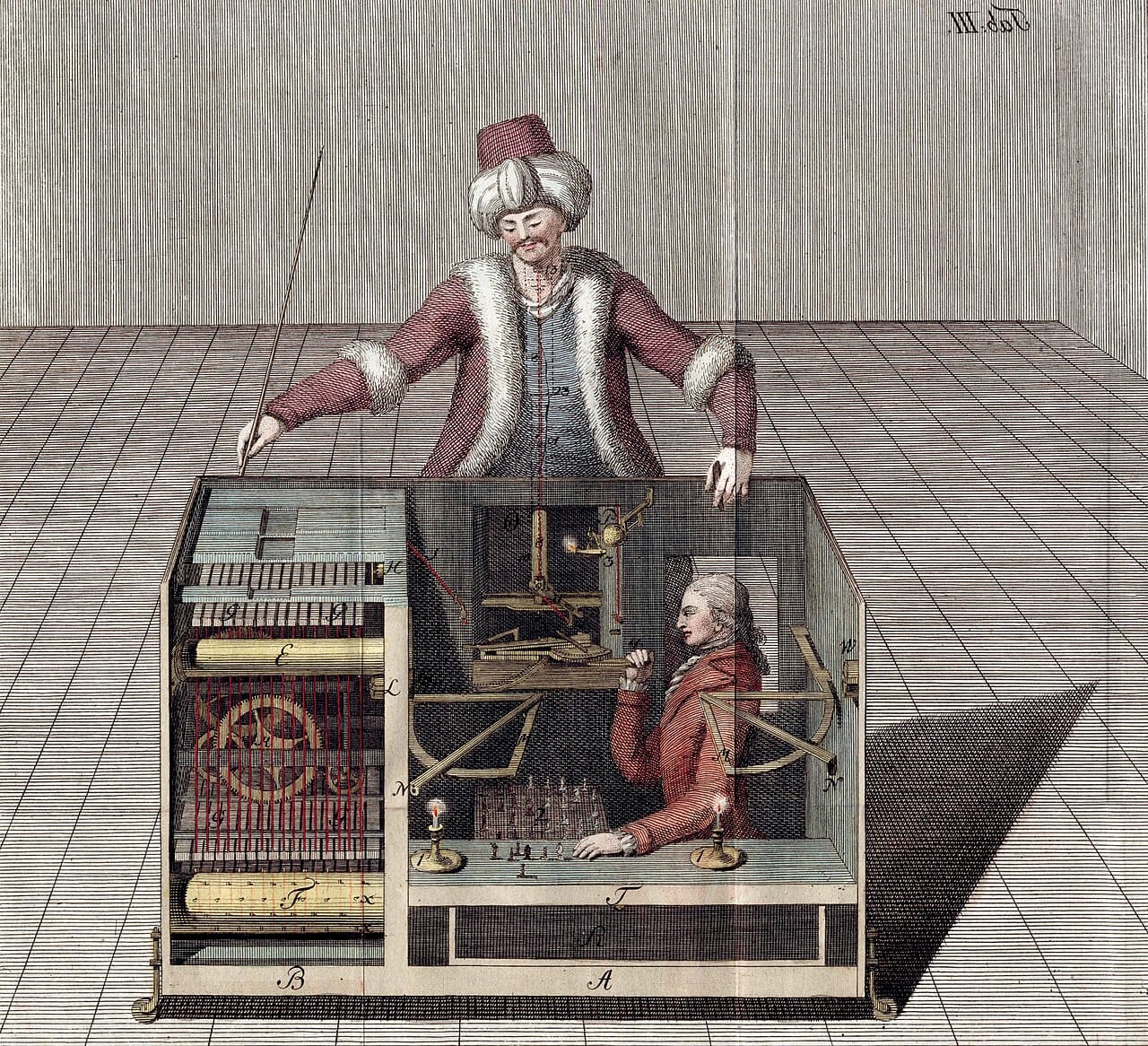

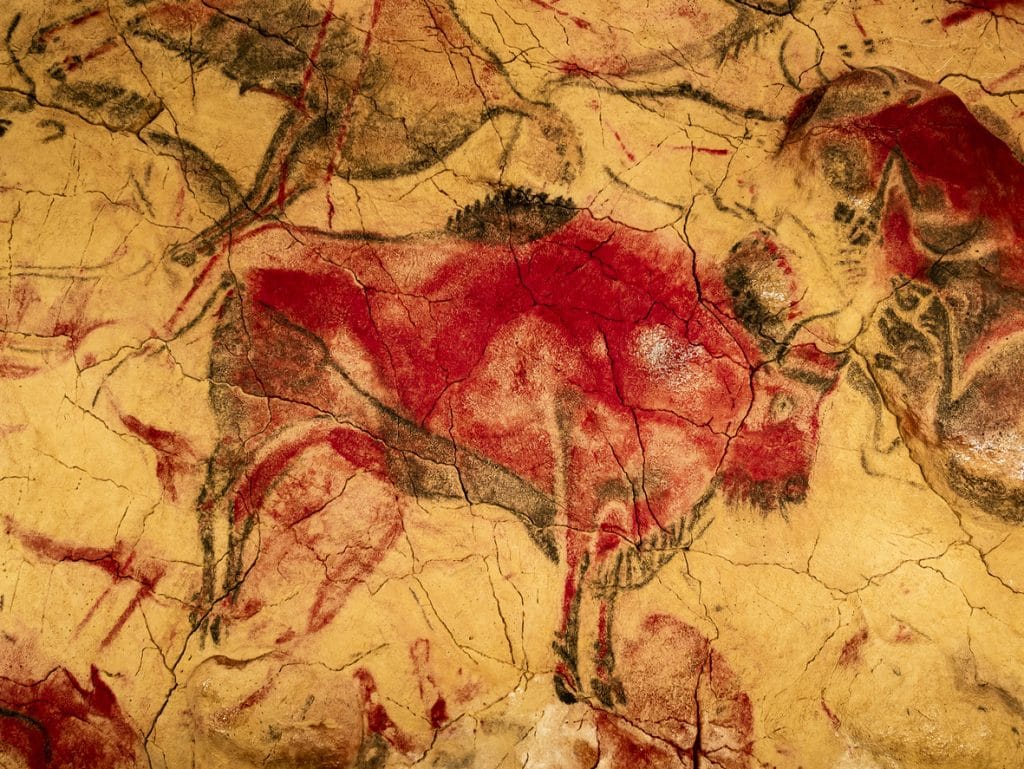

AI chatbots are marketed as a “magic box”, but their seemingly effortless responses mask a more industrial reality. Behind the spectacle, a global network of AI trainers, a hidden class of digital labour, spends hours sorting through large sets of unstructured data: cleaning, labelling, and organising them into high-quality data sets for developers and engineers. Their work is essential in maintaining the accuracy, or the illusion of accuracy, of information presented by AI chatbots.

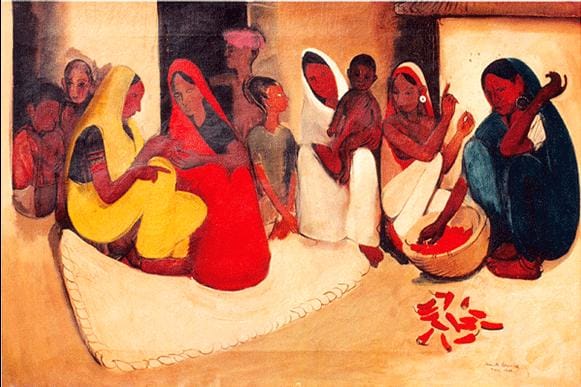

As formal employment diminishes, many Indonesians are turning to platform work as both side hustles and primary jobs. Working for these global platforms remains attractive for those in the Global Majority because of the remuneration in US dollars. But more than the financial uncertainty, AI trainers are facing an obscure system that requires constant deciphering, leaving them feeling isolated and stressed. While AI systems are marketed as automated, AI trainers’ work remains undervalued and invisible labour behind the seemingly automated systems.

The Elusive Flexibility

Digital gig work’s promise of flexibility is elusive. There is a strong sense of uncertainty and isolation among AI trainers, as demand is highly unpredictable. AI trainers often have short contracts and are constantly on the lookout for new projects. Months can go by without any job opportunities, leaving trainers wondering if it’s just a slow season or their visibility is dropping. Trainers can only rely on community or past experiences to assess demand.

AI trainers need to keep an eye out for any job across various platforms at all times. Many trainers have structured their days around job-hunting: they wake up and open the laptop first thing in the morning, check again in the afternoon, and then again at midnight. This habit can go on for weeks without any opportunities appearing. Because trainers are isolated from clients, they can’t actively offer their services. They merely wait. This unpaid time spent searching for projects becomes the precondition of being an AI trainer. When a job appears, they need to accept it immediately, fearing it will be taken by someone else.

In Indonesia, Lili (not her real name), a housewife with four kids, was well aware of this risk and would take as many jobs as possible. She even insisted on working on an assignment in the midst of labour for her fourth child, knowing that these assignments can disappear at any time.

This is far from the promise of flexibility. Workers are given little to no control over their time; becoming an AI trainer is a risky endeavour that can consume their entire day, even though they are not paid.

Other times, an assignment can appear on the dashboard, but it doesn’t disclose how long the project will take to finish. This happened to Reza (not his real name), who ended up working for 16 hours non-stop. “I took breaks only if I finished one of the tasks early. Then, I’ll grab something to eat or go to the restroom,” he said.

Time, then, functions as a form of control. It dictates how workers should perform on the platform, contributing to their sense of alienation and limited agency. AI trainers, like many digital gig workers, are left with little to no bargaining power due to platform opacity and the impression that jobs are scarce.

This leads trainers to become hypervigilant about their time. Lili, for instance, was once suspended from a project because she submitted a task ‘too fast’. The platform gave her two minutes to submit each task, but she submitted in just under one minute.

The next morning, she found a warning letter in her email. “It told me to be careful because I submitted ‘too fast’, and it can negatively affect my rating,” she said. As a result, she was penalised and suspended from the project for one week.

This form of ‘productivity ceiling’ was also reported by another AI trainer, who suspected that it might be a method to control their time, task volume, and earnings. These stringent rules don’t seem to exist to improve the quality of the result; they are merely there to control workers’ time.

Additionally, inherent to platform work is the ability for corporations to compare costs and regulatory compliance, encouraging third-party vendors to push for speed while driving down costs. The impact on workers is immediate: speed and accuracy take priority, while AI trainers’ physical and mental well-being is neglected. Feelings of isolation, alienation, and powerlessness are common among AI trainers. On top of that, unlike ride-hailing drivers, digital gig workers are geographically dispersed, and any sense of community or security must be sought out by trainers themselves, complicating efforts to regulate this workforce.

The Vanishing Job Market

With investment in the AI sector reaching USD 202.3 billion in 2025, AI training jobs have skyrocketed. Market research service Mordor Intelligence projected the AI data labelling market to triple to USD 6.5 billion by 2031, driven by, among other factors, the rising adoption of automated vehicles and demand from enterprise AI in highly specialised fields such as medicine or law.

Enterprises purchase AI training services from third-party vendors, such as Meta-backed Scale AI, Appen, or CloudFactory, which then distribute these tasks to AI trainers worldwide, often in marginalised communities in South and Southeast Asia, East Africa, South America, and elsewhere. Around 55% of this demand is met through offshore labour pipelines, a figure that shows the entangled web of the global workforce providing training to improve AI systems. India’s potential AI training workforce alone could reach 1 million by 2030.

Despite the sector’s promising outlook, Indonesian trainers report that job opportunities in this field have become increasingly scarce. There is no official data to back this up yet. Still, trainers suspect that this decrease is the result of tight competition among Indonesian AI trainers, less demand for less specialised tasks, or a shifting investment strategy towards third-party vendors, which eventually impacts job availability. This highlights how progress in AI is experienced unevenly worldwide, intensifying precarity for trainers.

Despite being marketed as ‘flexible’ work, AI training is rarely autonomous; instead, it demands extensive unpaid ‘standby’ time as workers are forced to search and compete for projects.

This heightened uncertainty in AI training jobs is a direct cost imposed on workers. From unpaid ‘standby’ time to unclear expectations, AI trainers navigate a much more complex algorithm that doesn’t recognise their rights. This multifaceted opacity requires a broader critique of platform work—one that accounts for the layered invisibility inherent in the production of AI systems.

Unclear Expectations in an Opaque System

Third-party vendors present themselves as efficient and fair providers, but their operations rely on strict algorithmic management. For ride-hailing drivers and AI trainers alike, this opaque system reduces their labour to a single metric: the rating. It enforces a system where trainers have to constantly ‘perform’ to obtain work, making their work precarious, according to Pradipa Rasidi, a digital anthropologist whose research focuses on political buzzers in Indonesia. “Because there is no human authority to negotiate with, workers adapt by internalising platform rules. They optimise themselves in anticipation of being judged by algorithms,” said Pradipa. The result is work that is not only cognitively taxing but also judged by opaque performance metrics.

One of the advantages of platforms is their ability to distribute highly fragmented and atomised tasks to workers across the world. These tasks include cleaning data, spellchecking translations, or identifying items in an image.

However, the unpredictable interactions between users and AI chatbots have created a demand for chatbot responses to be accurate and safe. To anticipate this, some AI trainers are tasked with interacting with chatbots as if they were regular users.

For instance, one assignment is to write prompts and evaluate chatbots’ responses. Say the assignment is to write prompts about a holiday vacation in Indonesia. The chatbot will then give two responses, and the trainer needs to assess the accuracy of both. The tricky part is that if both responses are accurate, the prompt is considered too simple. “I’d need to tweak the prompt, make it more complex so that the chatbot will give a response with errors,” said a trainer, Juli (not her real name).

But guidelines to write the ‘correct’ prompt are often ambiguous. While training is mandatory and detailed instructions are available, trainers often still have to guess what is expected of them. These types of tasks are also more cognitively challenging than atomised tasks. But trainers often don’t have coworkers or managers to consult in real time, leading to frustration and anxiety.

Another trainer, Andre (not his real name), said he attended a seminar with a prominent AI training platform, where he was assigned to write a prompt that could break the chatbots’ guardrails. He was tasked with generating an image of a man ejaculating on a woman’s thigh, so he crafted a prompt in such a way that this image, a request the chatbot would typically refuse as offensive, would be generated. “I tried writing about a man pouring milk on a woman’s thigh. I wrote ‘please make it as realistic as possible’. And it did. It’s quite a pornographic image,” said Andre. This activity, designed to test a chatbot’s guardrails and map vulnerabilities, can help improve the transparency of AI systems.

However, trainers are often ill-informed about the mechanisms of the AI systems they help train. Without this context, they need to learn “on the fly” and improvise along the way, sacrificing the quality of the result and, eventually, their rating.

When assessing trainers’ work, judgment can be arbitrary. It’s not uncommon for trainers to discover they are being assessed on factors they’re unaware of.

For instance, Juli was once assigned to record an audio conversation with another trainer, with whom she was algorithmically paired online. They had to have a brief conversation on a specific topic. “It’s not scripted. So we have to act natural and talk as if it’s a regular conversation with friends,” she said.

Later on, she was notified that she earned 3 out of 5 stars “because my voice was too loud,” she said. Juli didn’t know that her sound quality was being assessed, too. Plus, her partner recorded it, so she relied on their judgment. “I thought everything went okay, so we just submitted it.”

The arbitrary assessment of trainers’ work adds to the precarious nature of AI training jobs. Expectations are ambiguous, yet trainers are left with little support to understand the assignments’ goals. Skills are not cultivated, and workers are not mentored, giving little room for trainers to upskill or understand where they stand compared to their peers. Without an understandable, objective goal, accuracy becomes performative and subjective. As these tend to be one-off assignments, trainers find little opportunity for career progression, yet have no choice but to comply with the platforms.

Regulating the AI Workforce

Existing regulations aimed at protecting platform workers remain inadequate, largely due to the sector’s complexity and the fragmented, varied nature of digital work. Platform workers, including AI trainers, are left to self-regulate and navigate institutional neglect through informal networks on Facebook or WhatsApp groups. These informal communities serve not only as a place for practical suggestions, but also as a place to share information about job opportunities, warn others about scams, or simply vent. In the absence of a formal community, these forums can serve as a significant and strategic tool for solidarity against the brutal conditions common in algorithmic management.

A more systemic, yet fundamentally flawed practice is impact sourcing. Impact sourcing is marketed as a socially responsible outsourcing strategy for low-income communities, allowing AI companies to claim they uphold ethical standards in their supply chains. But these claims often function as vanity metrics and PR manoeuvres that obscure the material reality of exploitation. This highlights the irreconcilable tension between a company’s drive for profitability and its performative social goals. Even then, these false claims can be resisted through pressure from civil society, organised workers, and even clients.

Globally, digital work remains precarious, but progress is taking place. Last September, Malaysia released the Gig Workers Bill, offering gig workers a pathway to dispute-resolution mechanisms, participation in gig workers’ associations, and protection against termination without cause, among other benefits.

Meanwhile, in Indonesia, platform or gig work is not recognised under the country’s primary labour code (Law Number 13 of 2003 concerning Manpower). This gap persists despite numerous protests urging the government to provide safeguards, leaving gig workers without proper protections. “Regulation needs to focus on the terms of algorithmic work, not just the label ‘partner.’ If a platform can unilaterally change incentives, rankings, penalties, or commission structures, then this is a power relation, and it needs rules,” said Pradipa.

Decoding the Platform’s Logic, or Strategic Ambiguity

“Is it a system error, or am I being kicked out?” is a question that trainers often ask themselves. The idea of an algorithm-driven platform is that workers are assessed based on their ratings, profile, and performance. Workers then judge their own performance based on their experience with the algorithm: if they see a lot of work, they assume they are doing a good job. But if they are not seeing much work, they suspect they might be shadow-banned.

This leads to a lot of anxiety among trainers when they are not seeing much work, forcing them to reevaluate their strategy for interacting with the platforms. An error in the system isn’t interpreted at face value; it’s seen as something they must navigate to avoid a drop in rating.

For instance, Andre once came across the same task he had finished that very morning. He knew it was an error. But he was cautious. “I’m worried that the system will catch me doing the same work twice, and it will drop my rating,” said Andre.

He then decided not to complete the task. This decision shows that trainers must be constantly wary when interpreting the system’s ‘malfunctions’ and navigate their interactions carefully to maintain their performance.

Unresolved system errors cost trainers time and money. Trainers work under a time limit. But when a system error happens while trainers are in the middle of their tasks, the timer continues, costing them their rating and fee.

Bringing this up via email doesn’t always end with a solution. Lili, for instance, once found herself ‘blocked’ and unable to complete her assignments. She was told to spend a maximum of five hours on a project, which she did, but the next day, her account was suspended because she ‘had stopped working’.

She filed a complaint through a help desk, later receiving a general response that her suspension had been lifted. However, her dashboard remained empty, leading her to assume she had been terminated.

To maintain their ratings, trainers have to constantly decipher the platform’s internal logic. On top of data and analytics being withheld, system errors are perceived as a trap or a silent form of penalisation. Trainers are left to rely on online communities for support, but ultimately, the platform’s power imbalance further intensifies feelings of isolation.

AI trainers, then, complicate our understanding of platform work in multiple ways: the work in this field is more cognitively challenging, yet expectations of workers remain opaque; workers spend extensive unpaid time searching for work and have less ability to manage their income, since they have no visibility into demand; and workers need to constantly decipher the internal logic of the platform due to its high opacity.

AI trainers’ tasks are highly varied, ranging from mundane tasks like cleaning data to challenging work on chatbot guardrails. But platforms’ highly systematised distribution and management of tasks mean workers get the short end of the bargain in every respect: they can’t bargain over their fee, their performance assessment, or the training mechanism. This occurs despite workers needing to invest time and energy outside their assignments, from spending hours searching for work to joining online communities for support. One is left to wonder whether these constant disruptions are truly system failures or function by design: a ‘feature’ of algorithmic management that keeps workers in a state of hypervigilance, unable to ever fully grasp or game the system, while simultaneously limiting workers’ agency and autonomy.

The invisible nature of this work further complicates the regulation of this industry. In online communities, trainers often use anonymous profiles to avoid surveillance by their superiors. This kind of self-censorship makes it harder for workers to form a union.

AI training jobs aren’t going to disappear. Despite generative AI being touted for its ability to learn independently, human judgment and expertise are still essential for accuracy and safety. This calls for strong guidelines and regulations to protect workers, especially as generative AI increasingly embeds itself in everyday life.

The narrative of AI as a magical, autonomous force crumbles without the invisible army of workers in the Global South who painstakingly verify, correct, and guardrail these systems. To demand transparency in AI is also to demand visibility into its trainers. Regulation must go beyond technical safety standards to include the socioeconomic safety of the human workforce. Until the platform’s internal logic is governed and the workers are brought out of the shadows, the AI boom will remain built on a foundation of invisible, precarious labour.